Home Lab NAS/SAN Shoot-Out Part 1

17th April 2011

In this new NAS\SAN Shoot-Out series I will be testing the NAS\SAN solutions available to those of you who want a free\cheap home-based NAS\SAN solution for your vSphere labs.

I will be providing test results for the following solutions:-

Iomega IX4-200D

Openfiler (2.99)

FreeNAS (7.2 and 8.0RC5)

NexentaStor (3.0.4)

OpenIndiana (Build 148 – using napp-it)

Oracle Solaris 11 Express (using napp-it)

Open-E DSS (6)

Part 1 of this multi-part series covers the results for the IX4 and Openfiler (2.99). The test environment has changed slightly since my previous Performance Testing post. The initial results were slightly skewed because the tests were carried out on an unaligned disk, so the results here come from a VM with a correctly aligned disk. Also tested in this round is a new addition to the HP MicroServer: a Lenovo M1015 (LSI 9240-8) controller card with the RAID 5 key fitted. Initial testing was going to be based on the HP SmartArray P410, but the card being tested kept failing (losing disks from the array at every reboot). I have also increased the amount of RAM in the MicroServer from the initial 2GB up to the maximum of 8GB.
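To illustrate what "aligned" means here, the following is a minimal sketch that checks whether a partition's starting offset falls on a chosen boundary. The 512-byte sector size and the 64KB boundary are assumptions for illustration (they are not stated in this post), and `is_aligned` is a hypothetical helper name. Windows XP's default first partition starts at sector 63, which is why an XP VM is unaligned unless the partition is created with a different offset.

```python
SECTOR_BYTES = 512  # assumed logical sector size


def is_aligned(start_sector, boundary_bytes=64 * 1024, sector_bytes=SECTOR_BYTES):
    """Return True when the partition's starting byte offset is a multiple of the boundary."""
    return (start_sector * sector_bytes) % boundary_bytes == 0


# Windows XP's default first partition starts at sector 63 (byte offset 32,256).
print(is_aligned(63))    # False
# Starting at sector 128 puts the partition at byte offset 65,536.
print(is_aligned(128))   # True
# Starting at sector 2048 puts the partition at 1 MiB.
print(is_aligned(2048))  # True
```

The boundary that matters in practice depends on the stripe or block size of the storage underneath, so treat the 64KB default as a placeholder.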

Lab Setup

OS TYPE: Windows XP SP3 VM on ESXi 4.1u1 using a 40gb thick provisioned disk (Aligned Disk)

CPU Count \ Ram: 1 vCPU, 512MB ram

ESXi HOST: Lenovo TS200, 24GB RAM; 1x X3440 @ 2.5GHz (a single ESXi 4.1 host with a single VM running Iometer was used during testing; no other VMs were active during this time).

STORAGE PLATFORMS

Iomega IX4-200D 2TB NAS, 4 x 500GB

HP MicroServer, 8GB RAM, 4 x 1500GB and various HDD\USB keys for Openfiler\DSS\FreeNAS\NexentaStor.

Storage Hardware: Software and Hardware based iSCSI and NFS, utilising an IBM\Lenovo M1015 (rebadged LSI 9240-8)

NETWORKING

NetGear TS724T 24 x 1Gb Ethernet switch

IOMETER TEST SCRIPT

To allow for consistent results throughout the testing, the following test criteria were followed:

1, One Windows XP SP3 VM with Iometer was used to measure performance across the platforms.

2, Again I utilised the Iometer script that can be found via the VMTN Storage Performance thread here; the test script was downloaded from here.

The Iometer script tests the following:-

TEST NAME: Max Throughput-100%Read

size,% of size,% reads,% random,delay,burst,align,reply

32768,100,100,0,0,1,0,0

TEST NAME: RealLife-60%Rand-65%Read

size,% of size,% reads,% random,delay,burst,align,reply

8192,100,65,60,0,1,0,0

TEST NAME: Max Throughput-50%Read

size,% of size,% reads,% random,delay,burst,align,reply

32768,100,50,0,0,1,0,0

TEST NAME: Random-8k-70%Read

size,% of size,% reads,% random,delay,burst,align,reply

8192,100,70,100,0,1,0,0

Two runs for each configuration were performed to consolidate results.
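The four access specifications above are easier to compare once the comma-separated values are given names. The following is a minimal sketch that parses them using the column order from the header line in the script; the `AccessSpec` type and the `parse_spec` and `describe` helpers are hypothetical names introduced only for this illustration.

```python
from collections import namedtuple

# Field order follows the header line in the script:
# size,% of size,% reads,% random,delay,burst,align,reply
AccessSpec = namedtuple(
    "AccessSpec",
    "name size_bytes pct_of_size pct_reads pct_random delay burst align reply",
)

# The four specification lines, copied from the listing above.
RAW_SPECS = {
    "Max Throughput-100%Read": "32768,100,100,0,0,1,0,0",
    "RealLife-60%Rand-65%Read": "8192,100,65,60,0,1,0,0",
    "Max Throughput-50%Read": "32768,100,50,0,0,1,0,0",
    "Random-8k-70%Read": "8192,100,70,100,0,1,0,0",
}


def parse_spec(name, line):
    """Turn one comma-separated specification line into a named record."""
    values = [int(v) for v in line.split(",")]
    return AccessSpec(name, *values)


def describe(spec):
    """Summarise the block size, read/write mix and randomness of a spec."""
    return "{}: {} KB blocks, {}% read / {}% write, {}% random".format(
        spec.name,
        spec.size_bytes // 1024,
        spec.pct_reads,
        100 - spec.pct_reads,
        spec.pct_random,
    )


SPECS = [parse_spec(name, line) for name, line in RAW_SPECS.items()]
for spec in SPECS:
    print(describe(spec))
```

Read this way, the two "Max Throughput" tests are sequential 32KB transfers, while the "RealLife" and "Random-8k" tests use 8KB blocks with 60% and 100% random access respectively.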

Iomega IX4-200D

If you take the time to look at the various home lab blogs out there, you will see that the Iomega IX4-200D is a great little unit that is used by a great many people. It has its problems, however, and some people aren’t great fans of the device, whether that’s down to performance or reliability. It must be said, though, that being on the VMware support list for vSphere makes it a valuable device for home labs. I wondered whether the device could match up to some home-brew solutions.

The specs of the IX4 make it semi-comparable with the low-spec HP MicroServer (apart from the RAM side of things, obviously), so it was always going to be interesting to see how it compared.

Specifications

LAN standards: IEEE 802.3, IEEE 802.3u

IX4-200D Testing Results

Openfiler 2.99

Having read a number of posts where people were saying that there wasn’t much of a difference between hardware and software RAID, I wanted to see for myself whether I could see any improvement. Unfortunately the M1015 isn’t a supported controller under OF 2.3, so I had to see if I could find a solution for it. Digging deeper in the OF Community Forums, I eventually found links to the rPath Repository where the OF 3 betas are stored. I downloaded the OF 2.99 beta, installed it onto the MicroServer and carried out testing. During testing, however, OF released a final copy of OF 2.99, so the software was retested. Luckily for me, the M1015 (LSI 9240) driver was installed during the installation process. One of the things I am not keen on with OF is the lack of ‘supported’ installation to a USB key. Whilst it’s possible to hack it to work, it’s not something that’s supported out of the box, which meant that I had to have an additional HDD installed in the server to house the OF installation.

I installed OF 2.99 onto a WD Velociraptor 150GB HDD for no other reason than that it was on a pile of disks. The installation found my M1015 adapter card without issue, so testing was carried out using both hardware and software RAID. Due to the limitations of the hardware card I could only test RAID 0, 1 and 5, whereas under software RAID I was able to test RAID 0, 1, 5, 6 and 10.
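As a rough guide to what each of those RAID levels costs in capacity, here is a minimal sketch of the standard usable-capacity arithmetic. It assumes all four 1500GB disks are used in every array (the post does not say how many disks each array used), works in nominal GB and ignores metadata overhead. `usable_capacity_gb` is a hypothetical helper name.

```python
def usable_capacity_gb(level, disks=4, disk_gb=1500):
    """Nominal usable capacity for a RAID level built from identical disks."""
    if level == 0:
        return disks * disk_gb          # striping, no redundancy
    if level == 1:
        return disk_gb                  # assumes every disk holds a full mirror copy
    if level == 5:
        return (disks - 1) * disk_gb    # one disk's worth of parity
    if level == 6:
        return (disks - 2) * disk_gb    # two disks' worth of parity
    if level == 10:
        return (disks // 2) * disk_gb   # striped mirror pairs
    raise ValueError("unsupported RAID level: {}".format(level))


for level in (0, 1, 5, 6, 10):
    print("RAID {:>2}: {} GB usable".format(level, usable_capacity_gb(level)))
```

With four disks, RAID 6 and RAID 10 give the same usable space, so the choice between them comes down to performance and failure tolerance rather than capacity.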

It should be noted that there is an issue with LVM that causes certain RAID set creations to simply not work, and even last week’s release of OF 2.99 hasn’t fixed it. I had been testing the OF 2.99 beta for a couple of weeks and ran into this problem earlier; although a workaround is available, the issue hasn’t been resolved in the 2.99 final release.

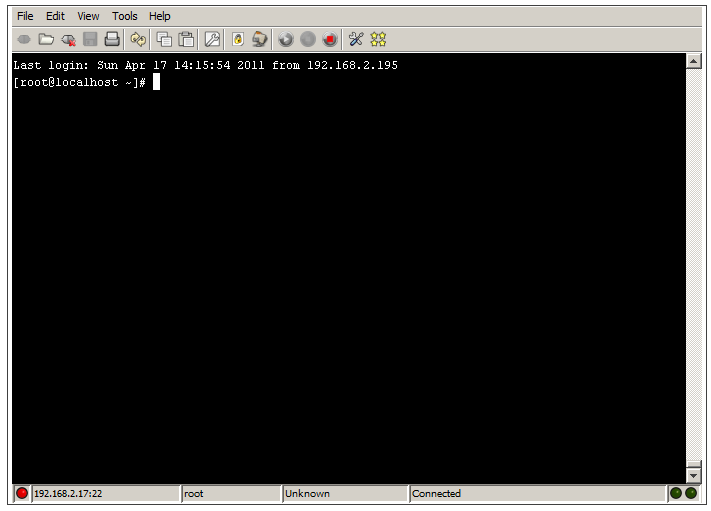

If you are going to be installing OF 2.99 and discover that you’re not able to create soft RAID 1 arrays then the following will resolve that for you.

Open up a secure console

On the OpenFiler Console, click on System.

Then click on Secure Console.

Choose your preferred connection profile (default is as above), click connect.

Enter the root password (created during the installation process) and this will launch the Secure Shell.

Now you need to type the following commands into the shell.

conary update mdadm=openfiler.rpath.org@rpl:devel/2.6.4-0.2-1

This will install the correct mdadm files. Once they are installed, type in the next command.

ln -s /sbin/lvm /usr/sbin/lvm

This ensures that the GUI is populated correctly.

This will then allow you to create all of the Software Raid types correctly.
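If you want to apply the ln -s step more than once without hitting a "File exists" error, the link creation can be made idempotent. The following is a minimal sketch only, assuming a Python interpreter is available on the box; `ensure_symlink` is a hypothetical helper, not part of Openfiler.

```python
import os


def ensure_symlink(target, link_path):
    """Create link_path -> target unless it already points there.

    Returns True if a new link was created, False if it was already correct.
    """
    if os.path.islink(link_path):
        if os.readlink(link_path) == target:
            return False
        raise OSError("{} already links elsewhere".format(link_path))
    if os.path.exists(link_path):
        raise OSError("{} exists and is not a symlink".format(link_path))
    os.symlink(target, link_path)
    return True


# Equivalent of the ln -s command above (run as root on the Openfiler box):
# ensure_symlink("/sbin/lvm", "/usr/sbin/lvm")
```

The function refuses to overwrite anything that already exists at the link path, so it should be safe to rerun after an upgrade or reinstall.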

Openfiler Hardware Raid Controller Testing Results

Openfiler Software Raid Testing Results

Openfiler Hardware\Software Results

We can see that having a hardware controller (in this case a Lenovo M1015) didn’t actually help with performance; in fact, in most of the tests software RAID performed better than the hardware controller.

As far as usability is concerned, Openfiler is one of the solutions that has been around for a long time. In fact, the release of OF 2.99 this week is a huge step forward for the Openfiler community, in as much as it came out of the blue after a number of complaints on the forums about the lack of any perceived upcoming releases (it’s been known that OF 3.0 is due, but it has been rumoured for the last 9 months).

In part two of this series I will be discussing solutions using FreeNAS and Open-E. Come back soon.

Hmmm, nice write-up… do you think maybe your bottleneck is your switch? Have you thought about getting a switch that supports port aggregation? I’m in the process of getting my lab equipment, so if you haven’t already tried my recommendation, I will test and write you a note of my findings.

Cheers!

Thanks for your work.

Thanks for putting this together. Any plans to include SoftNAS in your future testings? Seems to me that it’s very interesting too.

Switch bottleneck was my first thought on this as well, when I saw the 100 MB/sec speeds. Have you tested using either 10gb ports or something with port aggregation or etherchannel?