Unfortunately, whilst I really wanted to like FreeNAS 8, I can’t, because it’s so flaky. Why, you ask?

I installed FreeNAS 8 yesterday morning and carried out some performance testing. It wasn’t particularly strenuous; in fact, it’s the same testing that I have been doing on all of the other storage solutions so far.

The testing I did yesterday was the same test carried out for and by Vladan.

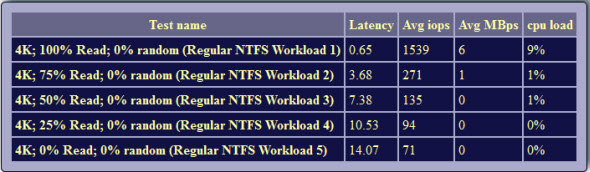

First of all here are the results of my iSCSI testing using ZFS.

As you can see, the iSCSI results are better than the NFS results that were achieved over at Vladan.fr

So I had really high hopes for FreeNAS 8. Obviously NFS performance isn’t anywhere near that of the Open-E DSS v6 product, but the iSCSI performance wasn’t so bad.

Wanting to continue with my own testing regime, I started running my own tests this morning, the idea being that I would use them in part 3 of my NAS/SAN Shootout series. Unfortunately, 45 minutes into my testing I suddenly had a machine that refused to respond to any commands. Further checks showed that the iSCSI datastore was now inactive whilst the NFS datastore was still online. The FreeNAS web console was also still active and let me browse all of its different sections, with nothing unusual appearing anywhere.

I initiated a reboot from the web console and started a persistent ping from my desktop. Whilst the server responded to pings after a couple of minutes, the web console itself took over an hour to initialise, and even then I was left with datastore issues. My iSCSI datastore was reinstated at reboot, but my NFS datastore now appears as inactive, and that’s the final straw for me.

FreeNAS 8 is not ready for Release status. There are still far too many issues that people are experiencing with this product, and there are features missing from it (admittedly not things I need) that people are crying out for (and that are apparently scheduled for FreeNAS 8.1). Given the unreliability of the product during light testing (a single VM running IOmeter SHOULDN’T bring the host/datastore down) and the fact that a remote reboot doesn’t restore all of the expected functionality, I really do have to advise people to use FreeNAS 8 at their peril.

Comments

9 responses to “FreeNAS 8 isn’t ready or reliable”

It seems that the product is not ready yet, even if it went out of beta.

It’s a shame because I really like the easy install and setup, with some enterprise class features like thin provisioning and snapshots.

Fully agree, it’s not ready for production. There are too many issues with it, and on the forums people are complaining non-stop about performance issues.

I have an HP MicroServer sitting here begging me to install either Openfiler or FreeNAS onto it.

I put FreeNAS onto it yesterday, and after an hour of testing, tuning and re-tuning I could not get any decent speeds out of it.

I might have a look at installing Openfiler on it this evening. Failing that, it will be WHS 2011 with StarWind iSCSI on it…

Actually, have a look at Open-E DSS v6. It looks like Vladan may well be going down that route now, and certainly from an NFS performance standpoint it’s far better than Openfiler is.

Yes, there are still some (little) bugs in the FreeNAS interface.

But I must say FreeBSD 8 / ZFS is stable overall.

You’re right about performance, but I feel it could be linked to FreeBSD SATA / NIC driver optimizations.

Exposing a CIFS file system based on a RAIDZ (ZFS) pool built on a 6×2 TB container,

I measured the following performance from a quad-core i7 @ 3.33 GHz client running Windows 7 x64:

Using a D525 (Atom 1.8 GHz dual-core configuration), I reach 46 MB/s (read) and 22 MB/s (write).

Using a Core i5 660 (3.3 GHz dual-core configuration), I reach 90-95 MB/s (read) and 46 MB/s (write).

Given the power of the Core i5, I would normally expect 100/100 for read/write, even using RAID5.

But the network usage graph shows a wavy activity, meaning that there are “holes” when no transfer occurs (in read and write modes).

I don’t know where this comes from or if I’m the only one to experience such goofy behavior from my FreeNAS box.

I can live with that (ZFS has been very stable and reliable for more than 2 months now), but I would be happy to get better performance.

What about you?

Denis

I’m looking to turn my HP MicroServer into an NFS datastore to use for VMware backups using their data protection appliance product. I too had very disappointing results with FreeNAS 8 NFS performance, to the point where I don’t think I can use it, and was thinking about NexentaStor since it’s free for up to 18 TB and I’m only using 6 TB (4×2 TB HDDs). I have never heard of Open-E DSS v6 but will research that as well.

Hi,

I have been through this before using NFS on FreeNAS since the 0.7 versions. The performance is terrible, around 2-4 MB/s writes, unless you use an SSD for the ZIL (ZFS intent log). If you open an SSH session to the FreeNAS box and run the command “zpool iostat zpoolname 1” while you do an NFS transfer, you will notice that the workload is very bursty and the write speeds are bad.
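To put a number on the burstiness that “zpool iostat zpoolname 1” shows, a minimal Python sketch along these lines could summarise captured output. It assumes the common seven-column layout (pool name, two capacity columns, read/write operations, read/write bandwidth); the pool name `tank`, the sample lines and the 1 MiB/s idle threshold are all made up for illustration.

```python
import re

# Multipliers for the size suffixes zpool iostat prints (e.g. "512K", "38.5M").
_SUFFIXES = {"": 1, "K": 1024, "M": 1024**2, "G": 1024**3, "T": 1024**4}


def parse_size(text):
    """Convert a value such as '0', '512K' or '38.5M' to a number of bytes."""
    match = re.fullmatch(r"([0-9.]+)([KMGT]?)", text.strip())
    if match is None:
        raise ValueError(f"unrecognised size: {text!r}")
    return float(match.group(1)) * _SUFFIXES[match.group(2)]


def write_bandwidths(lines, pool):
    """Yield the write-bandwidth column (bytes/s) for each sample line of `pool`."""
    for line in lines:
        fields = line.split()
        # Assumed layout: pool, 2 capacity columns, read/write ops, read/write bandwidth.
        if len(fields) != 7 or fields[0] != pool:
            continue
        try:
            yield parse_size(fields[6])
        except ValueError:
            continue  # header or otherwise unparseable line


def summarise(samples, idle_threshold=1024 * 1024):
    """Return (mean bytes/s, peak bytes/s, fraction of samples below the threshold)."""
    samples = list(samples)
    if not samples:
        raise ValueError("no samples")
    idle = sum(1 for s in samples if s < idle_threshold)
    return sum(samples) / len(samples), max(samples), idle / len(samples)


if __name__ == "__main__":
    # Hypothetical one-second samples, not real measurements.
    example = [
        "tank  1.20T  2.43T  0  0    0  0",
        "tank  1.20T  2.43T  0  310  0  38.5M",
        "tank  1.20T  2.43T  0  0    0  0",
        "tank  1.20T  2.43T  0  12   0  512K",
    ]
    mean, peak, idle = summarise(write_bandwidths(example, "tank"))
    print(f"mean {mean / 2**20:.1f} MiB/s, peak {peak / 2**20:.1f} MiB/s, idle {idle:.0%}")
```

A high idle fraction next to a peak far above the mean is the bursty pattern described above: writes arrive in short flushes with gaps in between.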

Now, with FreeNAS 8 only supporting installs on removable media, if you get yourself a good-quality SSD (about 8 or 16 GB should do) you can install to that, and since the ZIL by default resides on the boot drive you would have solved your problem.

There’s a good tuning document for NFS and CIFS on FreeNAS that I have used to get better performance out of both. It’s under the evil tuning guide on the FreeNAS main documentation site, plus there are many other settings that have been tried and tested by others.

With FreeNAS 7 I had panics when copying large files via CIFS, so I had to do some tuning of the KMEM value in loader.conf.

Hope that helps. I’m going to upgrade (well, rebuild!) to 8 shortly, so I will re-post with new results.

enjoy

/K

I have installed FreeNAS 8.0.2 on a P4 machine with a 250 GB HDD and a 1.5 TB HDD. I was able to see the datastore in my ESXi 5.0 server and was trying to create a VM. At 30% the datastore showed as inactive. Why? I tried to do a lot of research but was not able to find the reason.

If anyone has any idea how to resolve this issue, please post.

I’ve been running 8.0.3 P1 for about 2 months now, and until this past week, performance and reliability have been superb. I use it as a target for VMware Data Recovery for about 24 VMs. All of a sudden, performance has become spotty and it locks up, but not on all volumes. Some volumes lock while others are still operating. Here are my system stats:

FreeNAS Build FreeNAS-8.0.3-RELEASE-p1-x64 (9591)

Platform Intel(R) Pentium(R) CPU G860 @ 3.00GHz

Memory 8156MB

System Time Fri Jun 1 08:55:06 2012

Uptime 8:55AM up 1:55, 0 users

Load Average 0.26, 0.34, 0.34

OS Version FreeBSD 8.2-RELEASE-p6

After being completely happy with FreeNAS, I’m now looking for another option.

Hi Mark,

Can I ask if you’re using NFS or iSCSI? What kind of space are you using?

There are a couple of options out there such as Nexenta Community Edition, OpenIndiana and Open-E DSS (which was great for NFS but has a 2TB limit for the DSS-Lite edition).

I would probably steer clear of Openfiler, as there haven’t been any real updates for over a year (I am not even sure if they have done a respin in that time), and considering that rPath dropped out of the market space as far as lone developers are concerned, I would be concerned about where OF is heading.

There are also providers such as Starwind iSCSI SAN software or even the MS iSCSI software.

Simon